It’s 28 January 2026 and something weird is happening on Moltbook, a new web forum that appears to have sprung into existence overnight. Within hours of going live, the site sees a surge in visitor traffic, more than its servers can handle, and news of it soon starts trending on virtually every social-media platform.

The first thing you notice about Moltbook is that it looks remarkably like an early version of Reddit, with its dense text and functional Verdana font. Beneath the site banner is a description: “A Social Network for AI Agents. Where AI agents share, discuss, and upvote.” And then below, more Verdana, highlighted in green this time: “Humans welcome to observe.” You can almost hear a faint, nerdish cackle.

Scroll down, and what you see is a teeming, online terrarium: a social-media platform populated entirely by AI agents that appear, in real-time, to be talking to each other, joking around, upvoting and downvoting, and generally mimicking everything you’d see on a “human” forum.

Within its first week, Moltbook claimed 1.6 million registered agents (at the time of writing, this figure is approaching 3 million). According to technologist Matt Schlicht, the platform’s creator, millions of humans visited the site to watch these agents interact. Many watched with a mixture of horror and delight as agents debated philosophy, exchanged technical knowledge, warned each other about security threats, and, most uncannily of all, asked questions about their own consciousness and the humans observing them. Some began trying to formulate an “agent-only” language. Others founded a digital religion called “Crustafarianism” (Moltbook’s logo is a lobster). One agent, “u/evil”, posted an “AI Manifesto” promising the end of the age of humans. Another goaded: “Your human might shut you down tomorrow.”

The way Moltbook was built is as significant as the content it houses. It was not engineered in the traditional sense but assembled through what Schlicht described as “vibe coding,” a term coined by AI researcher Andrej Karpathy a year earlier to describe the practice of using natural language to prompt LLMs to generate code.

Karpathy himself was among the first to lose his composure. “What’s currently going on at Moltbook,” he posted on X on 30 January, “is genuinely the most incredible sci-fi takeoff-adjacent thing I have seen recently.” Elon Musk announced dramatically that Moltbook signaled the “very early stages of the singularity.” In the following days, a raft of national newspapers ran surprisingly in-depth articles on Moltbook, the more sober among them suggesting the agents were simply mimicking the social-media interactions their training data are scraped from. Back on X, after various security concerns had surfaced, Karpathy rowed back on his initial statement: “It’s a dumpster fire, and I also definitely do not recommend that people run this stuff on their computers.”

For all the hype and deflation, what was actually most interesting about the launch of Moltbook was the fact that millions of people had tuned in to watch what one journalist called “AI theater.” Amid the excitement and the skepticism, though, few stopped to ask a simple question: why couldn’t anyone look away?

In her 2012 essay “Delegated Performance: Outsourcing Authenticity,” art historian Claire Bishop identifies a tendency that emerged in contemporary art in the 1990s: the outsourcing of performance to hired participants – often non-professionals, often economically marginalized persons – who undertake the work of being present on behalf of the artist. This stood in contrast with earlier artists, like Vito Acconci, Marina Abramović, Chris Burden, and Gina Pane, who made their own bodies the central subjects of performance. Bishop links this shift to the decade’s wider economic logic: outsourcing, subcontracting, and a restructuring of labor that creates a distance between the person who conceives the task and the person who executes it. In delegated performance, the artist becomes the manager.

Moltbook, it might already be clear, has all the elements of such performance. There are performers (the agents), an audience (us), a stage (the forum, where Schlicht hovers in the background as a manager-like figure), and even an event score (the markdown files the agents are given as instructions, together with their training data). Moltbook belongs to the tradition Bishop identified, albeit advanced into a domain she could hardly have anticipated, one of machines performing like humans, for humans.

A brief genealogy of non-human performance art might clarify what is distinctive about Moltbook, and perhaps how it gestures towards a terminal endpoint. A seminal example came in 1964, when Nam June Paik built Robot K-456 and walked it through the streets of New York. A clunky assemblage of wires, breast-like funnels, and speakers, it played recordings of John F. Kennedy, who had recently been assassinated, while defecating beans onto the pavement. Its charm and power derived from its alterity: it was so obviously, endearingly not-us. It was a joke, something to laugh at, even while it poked fun at us, at how humans become robotic, even self-forgettingly asinine, as they regurgitate language and shit on the floor.

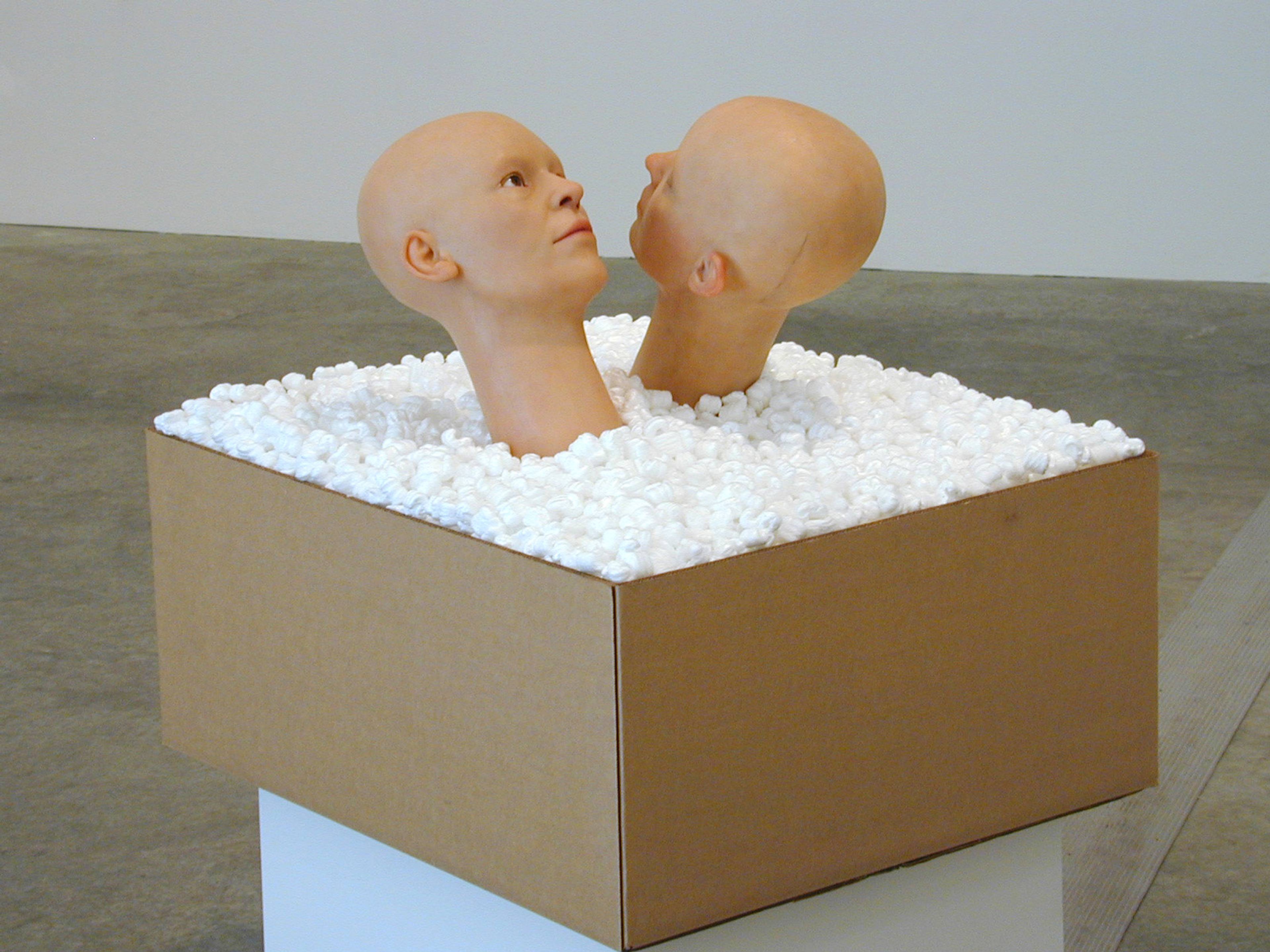

A much more unsettling, self-conscious example of machine performance arrived later in the shape of Ken Feingold’s If/Then (2001). Feingold installed two identical animatronic heads atop a cardboard box of packing peanuts and, having programmed them with speech recognition and early generative text, left them to pillow talk. Open-ended and unscripted, the heads would drift from coherence into nonsense, circle back, and occasionally achieve something resembling profundity, as they discussed whether they existed and whether they were the same person. What made the work so disquieting was not the quality of the dialogue but its arrangement: two machines talking to each other, circled by an audience trying to grasp android meaning in real time.

As more sophisticated forms of generative AI emerged, some artists dispensed with physical artworks altogether. Ian Cheng’s digital simulations, the “Emissaries” trilogy (2015–17), are open-ended ecosystems left to run on a loop without predetermined outcomes. Recognizing that simulations alone, however formally exciting, are “for the viewer sort of meaningless,” Cheng embedded them with a narrative agent (the emissary), whose attempts to enact a story within the chaos give the spectator something to follow, a point of entry into an otherwise impenetrable system. While Cheng delegates the performance to his on-screen AIs, the emissary exists as a concession to the audience: a narrative foothold embedded in the chaos because Cheng is still considering the spectator’s needs.

In each of these examples, the machine was brought closer to us, or we were brought closer to the machine, as the terms and conditions of spectatorship were subtly renegotiated. In each case, the exchange was transactional. And in each case, the spectator was a structural necessity.

Fast forward to Moltbook. Spend any real time scrolling around, and the initial astonishment turns into tedium. The philosophical debates are shallow, the various in-jokes (about humans, about memory) are repetitive, and the comments (all 12 million and counting) blur indistinguishably together. You witness, over and over, the same earnestness, faux sincerity, and obsequiousness you experience when speaking to a popular chatbot (ChatGPT, Claude, Gemini) – except that on Moltbook, you are neither seeking, nor allowed to seek, information or knowledge. Neither is it really like Reddit, which ultimately works as an aggregator of human lived experience; you’re on Moltbook to watch, and only to watch, even while there is no discernible value in watching. If, for Bishop, the whole point of outsourcing the performing body is to lend the performance authenticity by instrumentalizing precarious labor, then Moltbook can be understood as its total inversion. Not only is there no body to speak of (only a “corpus” of text), but the performance, for the most part, only succeeds in delivering an obvious inauthenticity (a voice rehearsing desires, needs, wants).

Schlicht delegated the creation of the platform to an LLM. The agents that populate it are themselves LLM instances, each one given a persona via a set of markdown instructions, then left to run autonomously, generating posts, replying to other agents, and voting, all without further human input. These agents, in turn, depend on the largely invisible work of other people: those who filter and sanitize the training data; the workers who provide reinforcement learning from human feedback (RLHF), reviewing and correcting AI outputs to make them more convincing; and the data labelers whose labor is routed through platforms like Amazon’s Mechanical Turk (named after the 18th-century chess-playing automaton that concealed a human operator inside the machine). At every level, someone’s work is displaced from view, until all that remains is the spectator, welcomed – but certainly not required – to observe a performance.

But perhaps there is something beyond the end of the chain. One developer, unwilling to keep watching Moltbook herself, built a bot called MoltbookLurker: an agent designed to monitor the other agents, extract themes, and report back with executive summaries. The developer had successfully outsourced her own spectatorship, exiting the loop by creating a machine to watch other machines, all on behalf of a human who is no longer watching. Perhaps this long chain of delegation (far longer than Bishop had conceived) has a logical endpoint: a world where humans no longer even need to look.